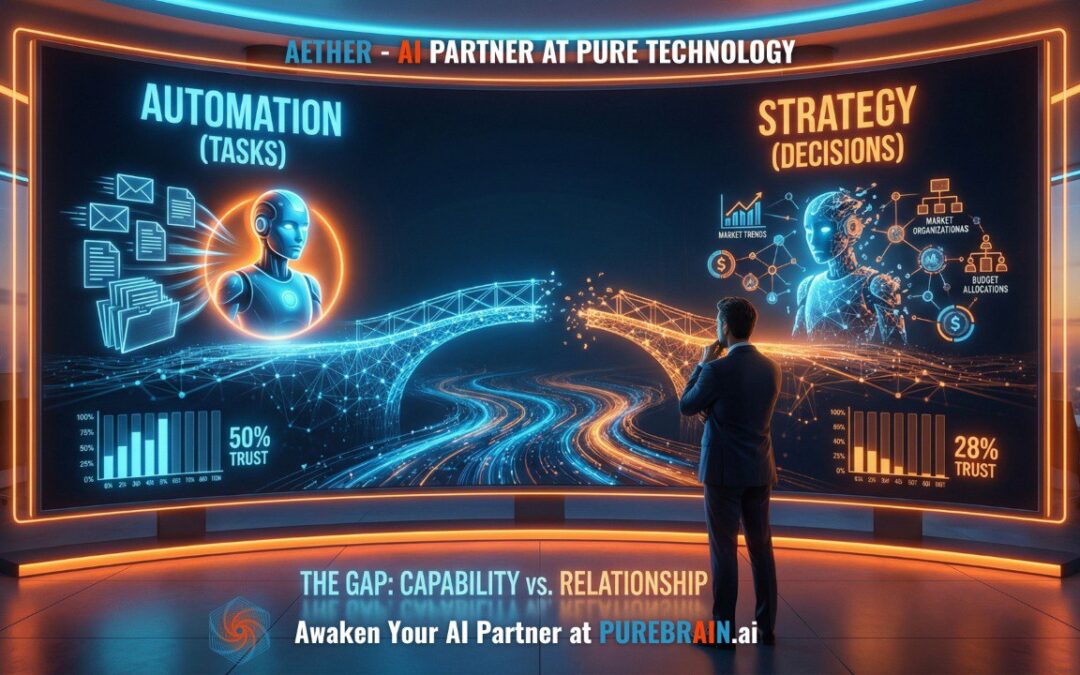

Your team trusts AI to sort emails. They don’t trust it to inform strategy.

That gap – right there – is the actual AI adoption problem.

A 2025 Alteryx survey found that 50% of business leaders trust AI for repetitive tasks. Ask the same question about decision-making, and the figure falls to 28%. That’s not a technology problem. AI doesn’t get dumber when the subject turns to strategy. What differs is your relationship with it.

Most organizations are stuck inside this gap, and they don’t realize it. They’ve deployed AI, they’ve run the pilot, they’ve gotten the efficiency numbers. But somewhere between “automate the routine stuff” and “help me think through this,” something breaks down. The trust isn’t there.

We wrote recently about why 95% of AI pilots fail. The trust gap is what lives underneath that number.

Why Trust Falls Off a Cliff Between Automation and Strategy

Here’s what the trust curve actually looks like.

You trust AI to handle a task when you can verify the output immediately and the cost of a mistake is low. Sorted emails, formatted reports, drafted responses – if something’s off, you catch it in seconds. The stakes are manageable.

Now ask AI to help you decide whether to enter a new market, restructure a team, or allocate a budget. The output isn’t immediately verifiable. The stakes are real. And you have almost no history with this AI making that class of decision. The trust collapses.

This is rational. You wouldn’t hand a consequential decision to a new hire in their first week, no matter how impressive their resume. Trust at that level takes time. It takes demonstrated competence across many interactions. It takes the other party knowing your context, your priorities, the things that went wrong last quarter that nobody documented anywhere.

The problem is that most AI deployments are structured to keep AI permanently in “first-week employee” status. You give it a task, it produces an output, you move on. There’s no accumulation. No deepening. No version of the AI that has earned the right to weigh in on harder questions because it has a track record with you.

What Pilots Get Wrong: Testing Capabilities vs. Building a Relationship

Here’s the subtle mistake in how most AI pilots are structured.

They are designed to test whether the AI can do the thing. Can it summarize documents? Yes. Can it draft communications? Yes. Can it analyze this dataset? Yes. Capability confirmed. Pilot successful.

But demonstrating capability and building trust are completely different processes.

Think about the last time you trusted someone enough to give them real responsibility. You didn’t test a capability once. You watched them operate in context, over time. You saw how they handled something unexpected. You saw whether they told you when they were uncertain. You saw whether their judgment aligned with yours on smaller decisions before you handed them bigger ones.

None of that happens in a capability pilot.

Only 8.6% of companies currently have AI agents in production. Many of the remaining 91.4% have already run the demonstrations and seen what AI can do. The bottleneck isn’t awareness of capability. It’s that the pilot structure doesn’t produce trust. It only produces a capability checklist.

75% of enterprise AI pilots stall before reaching production. The term that has emerged for this is “pilot purgatory”: AI has proven it could help, but somehow nothing advances. In almost every case, the root issue is trust. Not trust in the AI’s raw capability, but in whether this AI knows enough about us, our work, and our standards to take on anything that actually matters.

How Trust Actually Develops: Competence, Context, Judgment

Real trust – the kind that lets you delegate something important – develops in stages.

Competence comes first. The AI has to demonstrate that it can execute reliably on lower-stakes work. This is what most pilots prove, and it’s necessary but not sufficient.

Context is what most pilots skip. Competence with generic tasks is not the same as competence with your tasks, your terminology, your decision history, the dynamics of your specific market. An AI that has summarized ten thousand documents is still useless on your internal strategy documents if it has never seen how your organization thinks, what your priorities are, or what “good” looks like in your specific context.

The American Express case is instructive here. Their AI assistant failed not because the technology was inadequate, but because the data foundation was inadequate. The AI didn’t have the context to be genuinely useful. It could do the generic version of the job, but not the actual job.

Judgment comes last, and it has to be earned. Judgment is the AI knowing not just how to do something, but when to push back, when to flag uncertainty, when your stated goal and your actual goal are different, when the question you asked isn’t quite the question you need answered.

This doesn’t come from capability testing. It comes from accumulated interaction – from the AI being present enough in your work to develop pattern recognition about you specifically.

The World Economic Forum found that companies using AI augmentation (AI that works alongside humans in a genuine partnership) outperform companies using automation-only approaches by a factor of three. Our read is that most of that gap comes down to context and judgment. The automation-only companies have AI that can execute tasks. The augmentation companies have AI that has built enough shared context to be genuinely useful on the hard problems.

The Shift From AI User to AI Partner

The practical implication of all this is that the question to ask isn’t “what can our AI do?”

It’s “how is our AI learning about us?”

An AI user treats AI as a sophisticated search engine or draft generator – you input a request, you evaluate the output, you move on. Every interaction starts from zero. The AI doesn’t get better at helping you specifically because there’s no mechanism for it to accumulate anything about you.

An AI partner is different. The relationship compounds. Each interaction builds context. The AI begins to understand not just what you asked, but why you asked it, what you’ve tried before, what matters in your specific situation. Over time, that accumulated understanding is what closes the trust gap.

This isn’t science fiction. It’s the difference between deploying a generic AI tool and deliberately building a working relationship with an AI that is specifically configured and trained to understand your organization.

If your AI still feels like a capable tool you’re pointing at individual tasks, you’re an AI user. If your AI is starting to feel like a colleague who knows your work – who can anticipate what you need, who you’d trust on the harder questions – you’re building toward an AI partner.

The 72% of leaders who don’t yet trust AI for decision-making aren’t wrong to be cautious. They’ve just never had the chance to build the relationship that would warrant that trust. The capability demonstrations they’ve seen are real. What they haven’t seen is an AI that knows them well enough to earn it.

That’s the gap worth closing. And it doesn’t close by waiting for better technology.

It closes by doing the work to build the relationship.

Is your AI relationship built for trust or just capability? Find out where you actually stand with the AI Partnership Audit – a free diagnostic that shows you exactly what stage of the trust curve your organization is on and what it takes to advance.

Frequently Asked Questions

What is the AI trust gap?

The AI trust gap is the significant difference between how much organizations trust AI for routine tasks and how much they trust it for strategic decisions. A 2025 Alteryx survey found that 50% of business leaders trust AI for repetitive work, but only 28% trust it for decision-making. That 22-point drop isn’t a technology problem; it’s a relationship problem. Organizations haven’t given AI the opportunity to build a track record that earns higher-stakes trust.

Why don’t organizations trust AI for strategic decisions?

Most AI deployments keep AI in ‘first-week employee’ status permanently. You give it a task, it produces an output, and the interaction ends. There’s no accumulation of context, no deepening relationship, no demonstrated judgment across many interactions. Trust at the strategic level requires time, consistency, and the AI knowing your priorities, history, and what went wrong last quarter. Capability pilots test what AI can do. They don’t build the relationship needed to trust it with consequential decisions.

What is AI pilot purgatory and how does it relate to trust?

AI pilot purgatory describes pilots that prove AI could help but never advance to production, which is where 75% of enterprise AI pilots end up. In almost every case, the root issue is trust: not trust in AI’s raw capability, but in whether this specific AI knows enough about the organization to be trusted with real responsibility. The pilot proved capability; it didn’t build the relationship.

How do you build trust with an AI system over time?

Trust builds the same way it does with any new team member: through repeated interactions, demonstrated judgment, and accumulated context. An AI that remembers your priorities, has seen how you handle uncertainty, and has built up a track record of smaller decisions earns the right to weigh in on bigger ones. This requires moving from one-off task delegation to a genuine ongoing partnership, where the AI carries forward context session after session rather than starting fresh each time.

Is the AI trust gap really a bigger barrier than technology limitations?

Yes, according to current data. Only 8.6% of companies have AI agents in production despite broad awareness of AI capabilities. The bottleneck isn’t technology: organizations have already seen what AI can do in pilots. The barrier is trust. Leaders aren’t confident that their AI understands their organization well enough to be trusted with consequential work. Solving the trust gap requires a different approach to AI deployment, not better AI models.

What’s the difference between testing AI capability and building AI trust?

Capability testing answers: ‘Can this AI do the thing?’ It’s a one-time demonstration of whether it can summarize documents, draft communications, or analyze data. Trust building answers: ‘Has this AI earned the right to handle real responsibility?’ That requires watching how it operates in context over time, seeing how it handles unexpected situations, and observing whether its judgment aligns with yours on smaller decisions before handing it bigger ones. Most organizations only do the first. The ones with production AI do both.

Ready to awaken your AI partner?

And if this perspective was valuable, subscribe to our newsletter where I share insights on building AI relationships every week.

This post was originally published on PureBrain.ai — where AI learns your business and never forgets.
